Context

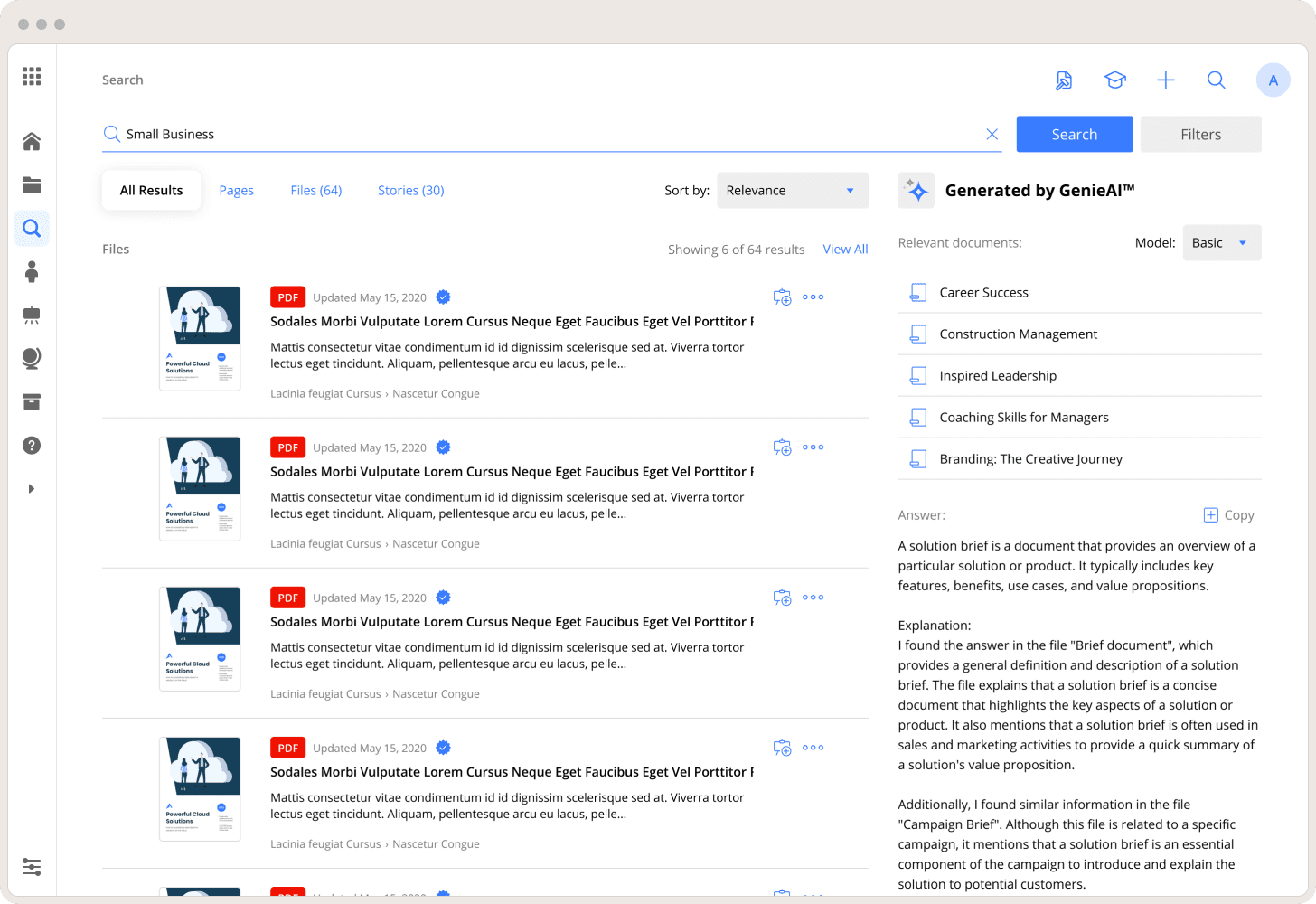

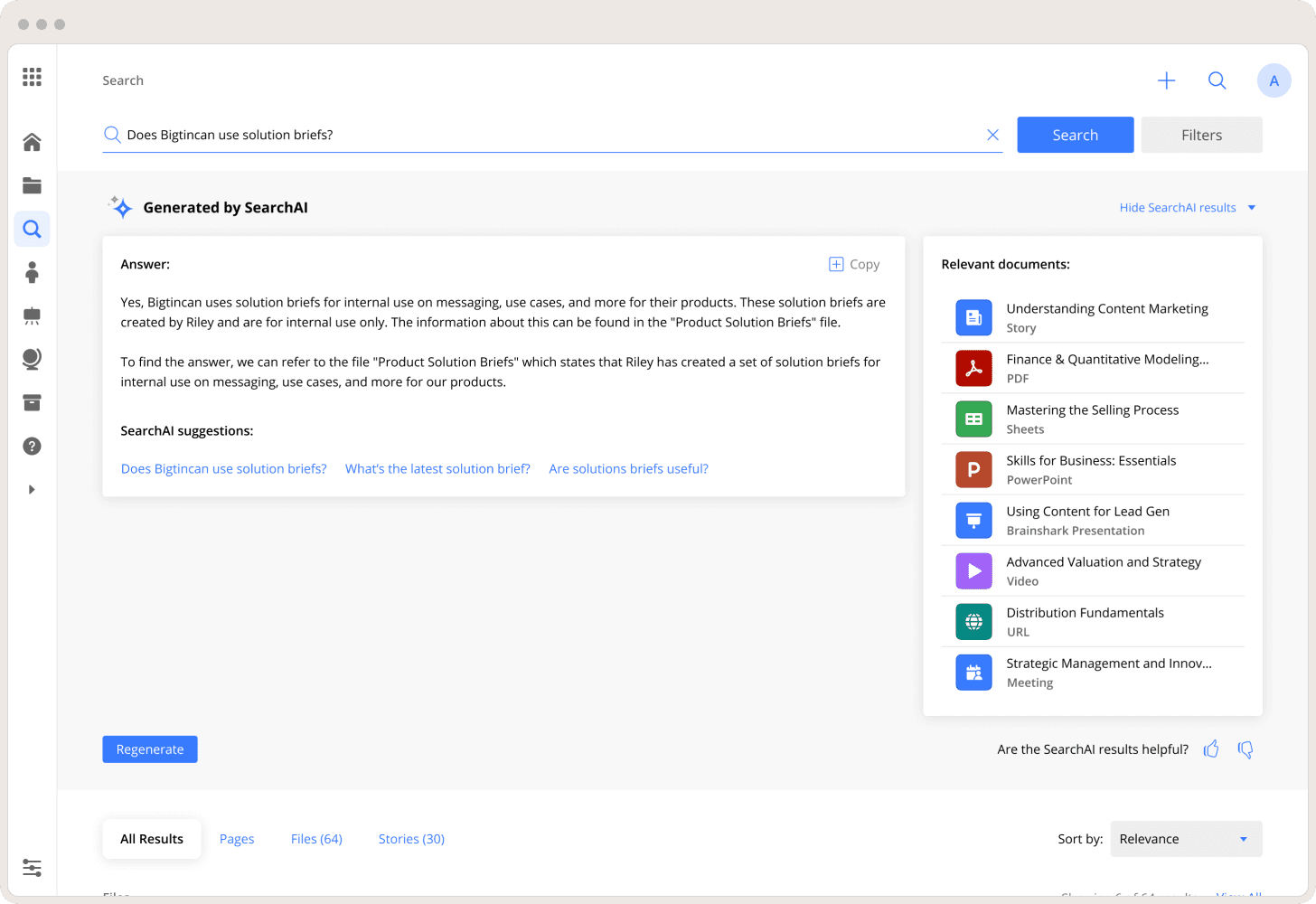

By 2023, AI was table stakes in enterprise SaaS, but only when it was accurate, explainable, and trustworthy.

At Bigtincan, AI directly impacted:

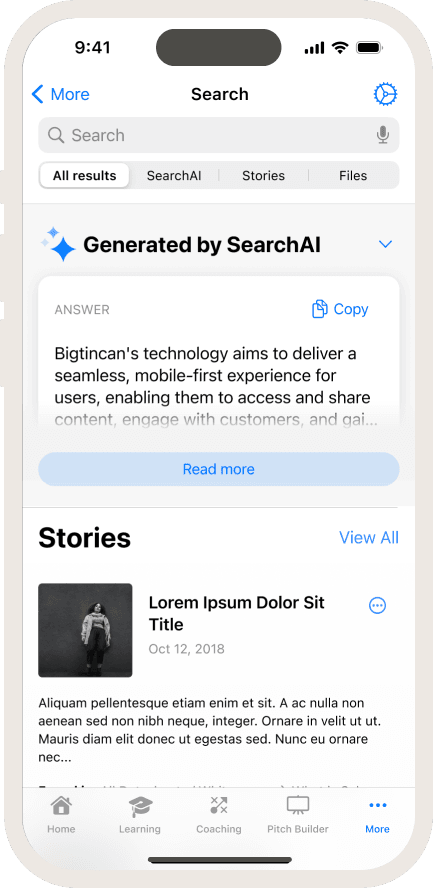

- Content discovery
- Sales productivity
- Platform trust

When it worked, adoption accelerated.

When it failed, trust collapsed.